Knowledge is cheap. Intelligence isn’t.

Why Yann LeCun thinks a cat might be smarter than ChatGPT.

Today’s frontier language models can pass simulated bar exams, write functional code, explain general relativity in three languages, and summarize a hundred-page report in a paragraph. A house cat cannot do any of these things. The cat cannot read. The cat cannot do arithmetic. The cat does not know what general relativity is and would not care to learn.

The cat, however, can do something else.

It can look at the gap between the couch and the bookshelf, plan the jump, notice the shelf is stacked with paperbacks that might shift, abandon the plan, and find a different route up. It knows that knocking the lamp off the side table will make a noise, and that the noise will bring you running and shouting. It has noticed you moved the food bowl, and its map of the kitchen has already updated. The cat will never write an essay. But it is doing something language models still do not reliably do: building a working model of a physical world it can act inside, and using that model to choose what to do next.

Until recently, that was a tidy division of labor. Language models did the reading and the explaining. Animals, including us, did the predicting and the acting. The agentic shift is erasing that division. We are asking models to do the cat’s kind of work too. We have started calling all of it intelligence. Some of the people who got us here think we are making a mistake.

The cat’s kind of problem

A coding agent fixing a production bug at 2 a.m. has to model the codebase well enough to predict what one change will break, and rework the model when something else does. A customer support agent reading an angry email has to figure out what the customer actually wants, predict whether a refund or an apology will get them there, and rethink it all when the next message is angrier. A research agent reading three hundred papers has to map the shape of the field, predict which gap is the one worth pursuing, and rebuild the map when the first thread dies. None of these are retrieval problems. You cannot Google your way to the right answer. They are predict-and-act problems, the cat’s kind of problem, transposed into domains with no couches and no bookshelves.

Today’s language models are much better at reading and recalling than at predicting and acting. On the read-and-recall side, the bar-exam side, they are astonishingly capable, even if not perfectly reliable. On the predict-and-act side, the cat’s side, they are inconsistent: sometimes they get it right, sometimes they confidently get it wrong. Every answer, right or wrong, comes back as fluent, confident-sounding text. It is hard to tell from the output alone when the model has the right answer and when it has only produced something that sounds right.

The language we have for talking about it does not help either. We use one word, intelligence, for both kinds of work. Yann LeCun thinks that single word is the whole problem.

He thinks the cat’s skill is what the word actually means. He thinks today’s language models’ skill is something else, something real and useful and worth a trillion dollars, but not the thing we have been calling it. In the sense that matters, he says, the cat is smarter than ChatGPT.

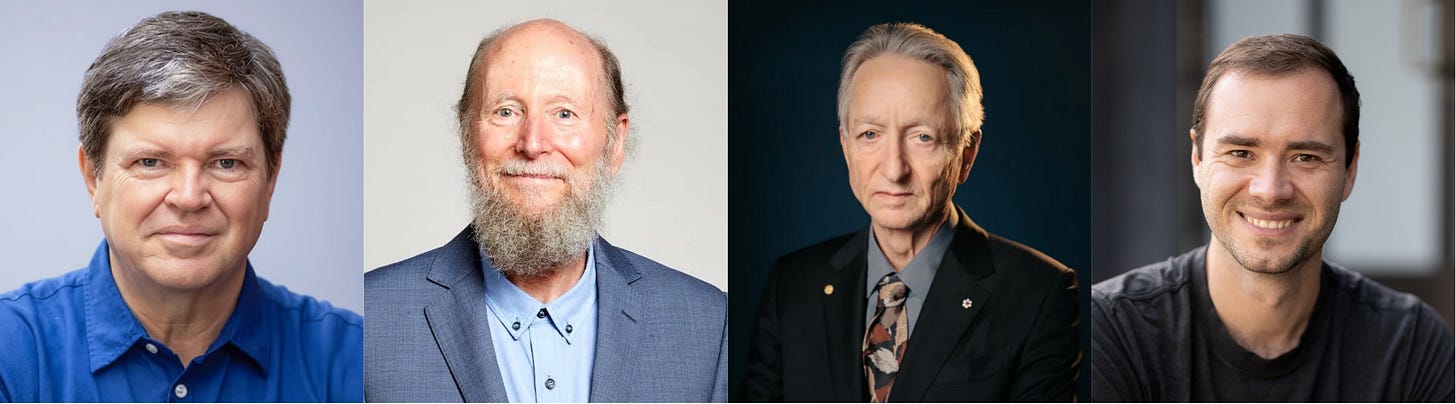

This is not a hot take from a guy with a podcast. LeCun won the Turing Award, co-founded Facebook AI Research in 2013, and remained one of Meta’s most important AI figures for more than a decade. By late 2025, as Meta deepened its bet on language models, he announced he was leaving to build something else. In March 2026, that something became AMI Labs: a new company with a billion-dollar seed round and a founding wager that LLMs are the wrong road to machines that truly understand, reason, and plan. Nearly every major AI company is pouring capital into the paradigm he just walked away from.

His argument compresses to a single sentence, and he has been repeating it in interview after interview:

Do not confuse the superhuman knowledge accumulation and retrieval abilities of current language models with actual intelligence.

If he is right, what have we actually been racing toward? And what did we ever mean by the word we have been calling it?

Three more answers. One shared warning.

LeCun is not alone in thinking AI has been measuring the wrong thing. The more surprising version of that argument comes from Richard Sutton.

Sutton is the theorist of the scaling era. His 2019 essay “The Bitter Lesson” became scripture inside the field. Its argument was simple. Across seventy years of AI research, methods that scale with computation have ultimately beaten methods that try to encode human cleverness. Researchers kept reaching for elegance. They kept getting outrun by brute force. Sutton told them to stop fighting it.

Much of the frontier AI industry spent the next six years treating that lesson as permission to scale. They scaled compute. They scaled data. They scaled parameters. They built the LLMs.

In September 2025, Sutton sat down with Dwarkesh Patel and said he thinks the field misread him. LLMs, he argued, are not the clean case of the bitter lesson many people think they are. They are a clever shortcut dressed up to look like brute force. They lean on a colossal amount of human knowledge, the entire scraped internet, and they do not learn from experience. A squirrel learns to crack a nut by trying. An LLM is shown a billion sentences about nuts.

Only one of them is learning.

Sutton thinks intelligence is the word for that one, and the field has spent six years stretching it to cover the other.

His objection sits next to LeCun’s, pointing at the same problem from a different angle. LeCun says LLMs do not model the world. Sutton says they cannot learn from acting in it. Both want the same next architecture: an agent that meets a world, predicts what will happen, finds out, and updates. The strange part is that this is not exotic at all. A child does it every day. Children, Sutton argues, are not primarily imitators. They are experimenters.

Two of the field’s most senior voices, then, are saying the current road is wrong. Not everyone agrees.

Geoffrey Hinton thinks the gap we just drew between cat-skill and LLM-skill is not really there.

Hinton shared the 2018 Turing Award with LeCun and Yoshua Bengio, then shared the 2024 Nobel Prize in Physics with John Hopfield. Few people on earth have spent more time studying how brains and neural networks resemble each other. His claim, made in interviews with LBC and elsewhere, is that today’s AI systems are much closer to doing what the cat does than LeCun allows. They do not merely parrot text. They understand, in a real sense. Some may already have something close to subjective experience. We refuse to see it, he says, because their substrate looks nothing like ours.

His argument borrows an old philosophical thought experiment about consciousness. Take one neuron in your brain and replace it with a silicon circuit that does what the neuron did: fires when its inputs fire, stays quiet when they don’t. Same behavior, same outputs. Are you still conscious? Almost everyone says yes. Brains lose individual neurons all the time, and nobody loses their mind one cell at a time. Now replace a second. A third. Continue until your skull is full of silicon. There is no point in this process where you can say “this is the swap that took my consciousness away.” If every step preserves you, the endpoint must too. A skull full of silicon is still a conscious mind.

Hinton’s move is to push this one step further. The neural networks behind modern AI are built from the same kind of primitives the thought experiment ends with: simple units that take inputs, fire or stay quiet, pass signals on. If silicon can host a mind in principle, the systems we already have may be hosting one in practice.

If he is right, the divide we drew earlier between the cat and the LLM doesn’t exist. The cat models the world. The LLM does too. We just refuse to see it because the LLM doesn’t look like anything that has ever had a mind before.

Andrej Karpathy thinks both sides of that argument are missing the point.

Karpathy is the practitioner of the four. He was a founding member of OpenAI, later directed AI at Tesla, and has unusually deep hands-on experience with frontier models and AI systems. When he writes about what LLMs are, he is reporting what he has watched them do, not arguing from a framework about what they should be. In his 2025 year-in-review essay he offered two metaphors that capture the strangeness of the field.

The first is jagged intelligence. Specialized human ability rests on a foundation of basic skills everyone has. A graduate physicist can count the letters in a word. So can anyone. The hard skill sits on top of a floor that comes with being a person. LLMs do not have that floor. A model will solve a graduate-level physics problem and then fail to count the letters in the word strawberry. The hard task was easy for it. The easy task was not. You cannot guess what it will fail at next.

The second metaphor is the one worth sitting with. LLMs, Karpathy writes, are not animals. “They are summoned ghosts.”

Animals, including us, were shaped over hundreds of millions of years by the pressure of staying alive. LLMs were shaped over months by being shown an internet’s worth of text and rewarded for sounding right. We call both intelligent. That is the only thing they share. Asking whether one is smarter than the other, Karpathy suggests, is a little like asking whether a submarine swims faster than a fish. The submarine moves through water. The fish swims. They are doing different things that happen to look similar from a distance. You cannot rank them on the same scale, because the scale was built for one of them.

Four voices. Three positions.

Sutton and LeCun say LLMs are a clever distraction from the real problem. Hinton says they are the real thing, already arrived, and we have not noticed. Karpathy says the question of whether they match us is the wrong question, because they are a category of their own.

Notice what these positions have in common.

None of them matches how the public conversation about AI sounds. “Smarter than humans” has become a marketing line. “AGI in two years” has become a fundraising pitch. Four senior voices in the field, from four different vantage points, are saying the same thing in different words: the question is more complicated than that, and the word you are using does not mean what you think it means.

To get anywhere useful, the disagreement needs a structure to live inside. There is one. It is older than this debate.

Three things we keep calling intelligence

For most of history, three abilities were inseparable: knowing, understanding, and wanting. A mind that had one always had the other two. So we used one word for them. Now they are coming apart, and the word is breaking with them.

The first is knowing. The capacity to store, retrieve, and recombine what has been said and written. A model trained on the internet can produce a sentence on any topic, in any style, in seconds. LLMs are superhuman at this. All four thinkers grant them this much. The disagreement is whether anything else is happening.

The second is understanding. The capacity to hold a working model of how things actually work, and to predict what will happen next when you act inside the world. The cat understands its kitchen. The pilot understands her airplane. The doctor understands the body she is treating. Understanding lets you recover when the world surprises you. This is what the four voices fought over. LeCun and Sutton say LLMs do not have it. Hinton says they do. Karpathy says the question is malformed.

The third is wanting. The capacity to care that one outcome happens rather than another. A cat wants its food. A doctor wants her patient to live. A novelist wants the sentence to be true. Wanting is not strictly intelligence. It is what makes intelligence reach. Without it, knowing and understanding just sit there. Humans have been trying to name this for thousands of years. Philosophers from Aristotle to Descartes circled it. Religions and poets across cultures gave it many names: soul, will, spirit, heart. None of the words quite stuck. We never had to settle on one, because no mind ever had the other two without it. Even Hinton’s strongest claims are about consciousness, awareness, and self-preservation, not about the biological wanting we see in hunger, fear, attachment, or survival.

Three abilities. One word. We have been building a system that is superhuman at the first, disputed on the second, and lacking the third. We have been calling all of it intelligence.

The cat clearly has the second and third. The LLM clearly has the first. Whether it has the second is exactly the fight.

What is actually rare

Knowledge is becoming cheap. Understanding is being contested in labs, on stages, and in billion-dollar bets. Wanting remains where it has always been, in the things that are alive.

Somewhere right now, a cat is watching the food bowl, listening for footsteps, deciding whether the lamp is worth knocking over to get attention. The cat does not know what it is doing or why. But in that one kitchen moment, it is doing all three things at once. It knows where the bowl is. It understands what knocking the lamp will set in motion. It cares which outcome arrives.

We have built machines that can do the first better than any mind that has ever lived. Whether they can do the second is the argument four of the field’s most senior voices cannot agree on. The third has not entered the conversation.

Maybe what we are building is not a smarter mind but a stranger one, a category we do not yet have a name for. The cat keeps watching the bowl. The machines keep telling us almost anything we ask. Somewhere between them, the word we used for both is quietly coming apart.

Further reading

Yann LeCun on walking out of Meta (Fortune, December 2025) and the AMI Labs $1.03 billion seed (TechCrunch, March 2026). For the cat framing: Christopher Mims, “This AI Pioneer Thinks AI Is Dumber Than a Cat,” Wall Street Journal, October 2024.

Andrej Karpathy, 2025 LLM Year in Review and Animals vs Ghosts, karpathy.bearblog.dev.

Geoffrey Hinton, interview with Andrew Marr on LBC.

Richard Sutton, The Bitter Lesson (2019). Dwarkesh Patel interview with Sutton, September 2025.

Houda Nait El Barj, What Becomes Valuable When Intelligence Is Cheap?, Substack, April 2026. A related argument from a different angle.